How Netflix Does Data Reliability

The platforms and practices behind Netflix's reliable ML systems

I’m a sucker for a good engineering blog, so for this Sunday, I’ve decided to share some of the knowledge I’ve gained over the years about Netflix’s ML infrastructure. Complete list of references at the bottom if you’d like to read further.

Hi! I’m Hodman 👋🏿👋🏿👋🏿, and I help teams build reliable data infrastructure.

Netflix’s scale makes reliability non-negotiable.

Netflix operates ML systems for recommendations, adaptive streaming, and personalization across 300 million global subscribers. Maintaining reliability at this scale requires platforms for experimentation, real-time data processing, model deployment, and observability. This case study examines the infrastructure Netflix built to support these requirements.

The Foundation: Metaflow for ML Pipeline Management

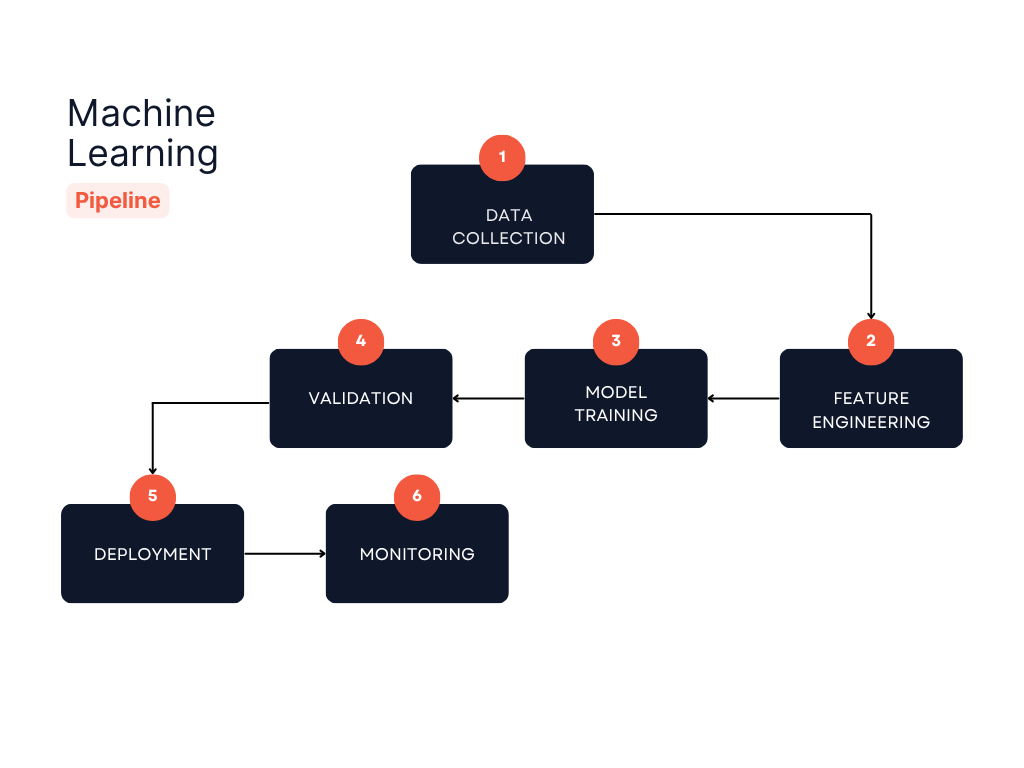

ML pipeline lifecycle at Netflix. Metaflow provides version control and artifact tracking at each stage, ensuring reproducibility from data collection through production monitoring.

Netflix uses Metaflow, an open-source framework it developed internally, to manage the ML lifecycle. Metaflow addresses what Netflix calls “the hidden 80% of effort in data science projects”: the infrastructure work that has nothing to do with building models but everything to do with making them work reliably in production.

Metaflow supports over 3,000 AI and ML projects at Netflix, executing hundreds of millions of compute jobs and managing tens of petabytes of models and artifacts. The framework provides versioning, data lineage tracking, and automatic artifact storage, ensuring that every model run is reproducible and traceable.

The platform integrates with Netflix’s production orchestrator, Maestro, enabling event-triggered workflows and seamless deployment. Data scientists can develop locally, test their flows, and deploy to production with a single command. This reduces the friction between prototyping and production deployment, a critical factor in maintaining reliability.
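To make the versioning and lineage idea concrete, here is a minimal sketch in plain Python. It is not Metaflow's API: `ArtifactStore`, `save`, `load`, and `lineage` are hypothetical names I made up for illustration, and the run and step names are invented. The sketch only shows the general technique of content-addressed artifact storage, where every artifact produced by a step is hashed and recorded against the run that produced it.

```python
import hashlib
import json


class ArtifactStore:
    """Toy content-addressed store: identical content always gets the same key."""

    def __init__(self):
        self.blobs = {}  # content hash -> serialized artifact
        self.runs = {}   # run id -> {step name: {artifact name: content hash}}

    def save(self, run_id, step, name, value):
        # Serialize deterministically so equal values hash identically.
        payload = json.dumps(value, sort_keys=True).encode("utf-8")
        key = hashlib.sha256(payload).hexdigest()
        self.blobs[key] = payload
        self.runs.setdefault(run_id, {}).setdefault(step, {})[name] = key
        return key

    def load(self, run_id, step, name):
        key = self.runs[run_id][step][name]
        return json.loads(self.blobs[key])

    def lineage(self, run_id):
        # (step, artifact name, hash) triples in the order they were recorded.
        return [
            (step, name, key)
            for step, artifacts in self.runs[run_id].items()
            for name, key in artifacts.items()
        ]


store = ArtifactStore()
store.save("run-1", "load_data", "rows", [1, 2, 3, 4])
store.save("run-1", "train", "model", {"weights": [0.5, 0.25]})
store.save("run-2", "load_data", "rows", [1, 2, 3, 4])  # same data, new run
```

Because keys are derived from content, the two runs above point at the same stored data blob, and `store.lineage("run-1")` lists exactly which artifacts each step produced. A real system would persist blobs to object storage rather than a dictionary.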

Experimentation Infrastructure: Testing Everything

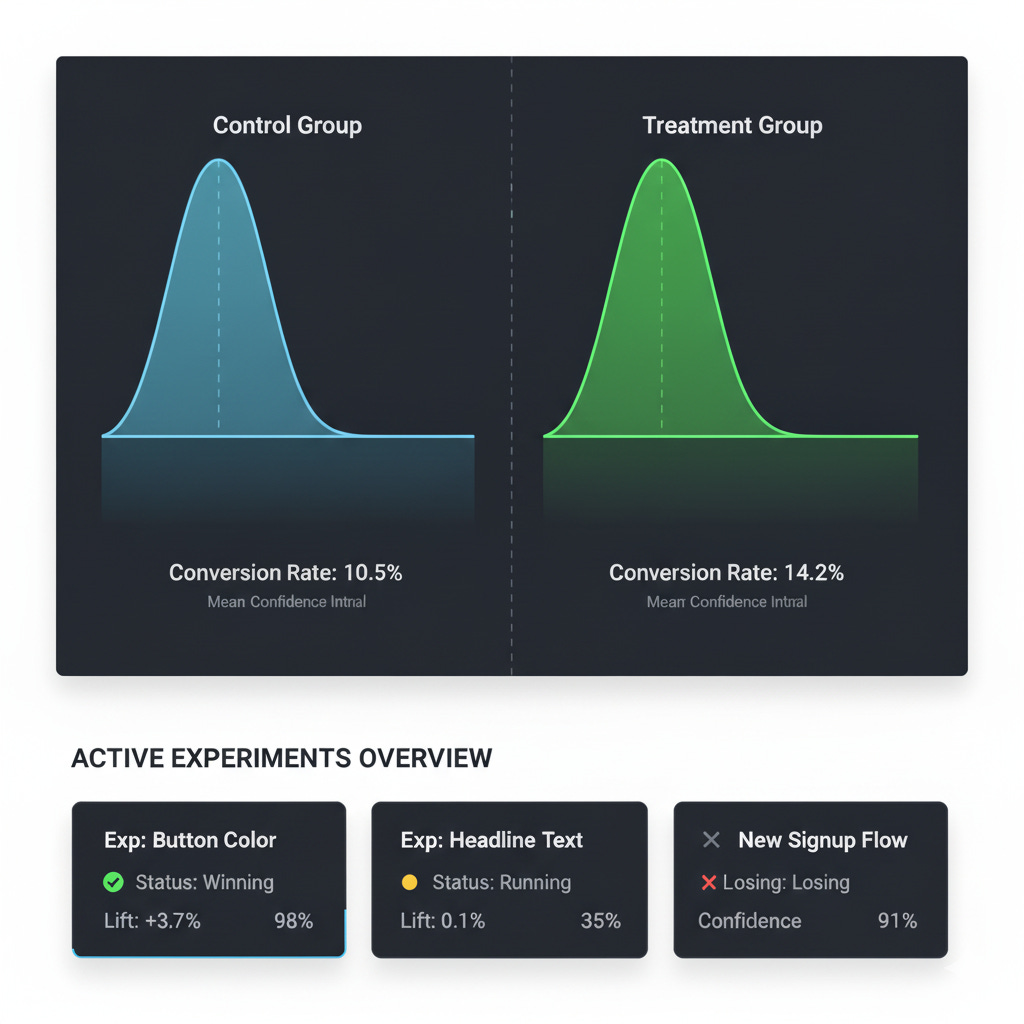

Netflix runs thousands of concurrent experiments with automated reporting and real-time metrics tracking.

Every product change undergoes A/B testing before becoming the default user experience. From UI redesigns to recommendation algorithm updates, Netflix runs thousands of experiments concurrently through its experimentation platform.

The platform provides fine-grained control over experiment allocation and evaluation. Netflix uses both real-time allocation for most experiments and batch allocation for mobile devices, where bandwidth constraints make real-time allocation impractical. The system handles everything from weeks-long UI tests to hours-long adaptive streaming experiments, automatically generating analysis reports and visualizations throughout the experiment lifecycle.
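One common way to implement experiment allocation is deterministic hashing, sketched below. This is an assumption on my part: the sources describe real-time and batch allocation but not the mechanism, and `allocate`, `batch_allocate`, and the experiment name `new_row_layout` are hypothetical. The point of the sketch is that the same function can answer a single lookup on demand or precompute assignments for a whole population ahead of time.

```python
import hashlib


def allocate(member_id, experiment, cells=("control", "treatment")):
    """Map a member to a cell deterministically by hashing (experiment, member)."""
    digest = hashlib.sha256(f"{experiment}:{member_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % len(cells)
    return cells[bucket]


def batch_allocate(member_ids, experiment, cells=("control", "treatment")):
    """Precompute assignments for a known population in one pass."""
    return {m: allocate(m, experiment, cells) for m in member_ids}


assignments = batch_allocate(range(10_000), "new_row_layout")
share = sum(1 for c in assignments.values() if c == "treatment") / len(assignments)
```

Including the experiment name in the hash keeps assignments independent across experiments, so a member in the treatment cell of one test is not systematically in the treatment cell of another.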

Netflix employs causal inference techniques alongside traditional A/B testing to isolate algorithmic changes from confounding factors. Their experimentation platform incorporates statistical rigor, providing a unified abstraction layer for arbitrary statistical models. This science-centric approach ensures that product decisions are driven by data rather than opinion.
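As a small worked example of the kind of statistical check an experimentation platform automates, here is a two-proportion z-test in plain Python. This is a textbook method, not a description of the models Netflix's platform actually runs, and the conversion counts are invented for illustration.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution via erfc.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts: 10.0% conversion in control, 11.0% in treatment.
z, p = two_proportion_z_test(conv_a=1000, n_a=10_000, conv_b=1100, n_b=10_000)
```

With these made-up numbers the difference comes out significant at the conventional 5% level. A production platform would also need to account for things this sketch ignores, such as repeated looks at the data and many metrics per experiment.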

Real-Time Data Infrastructure: Keystone and Stream Processing

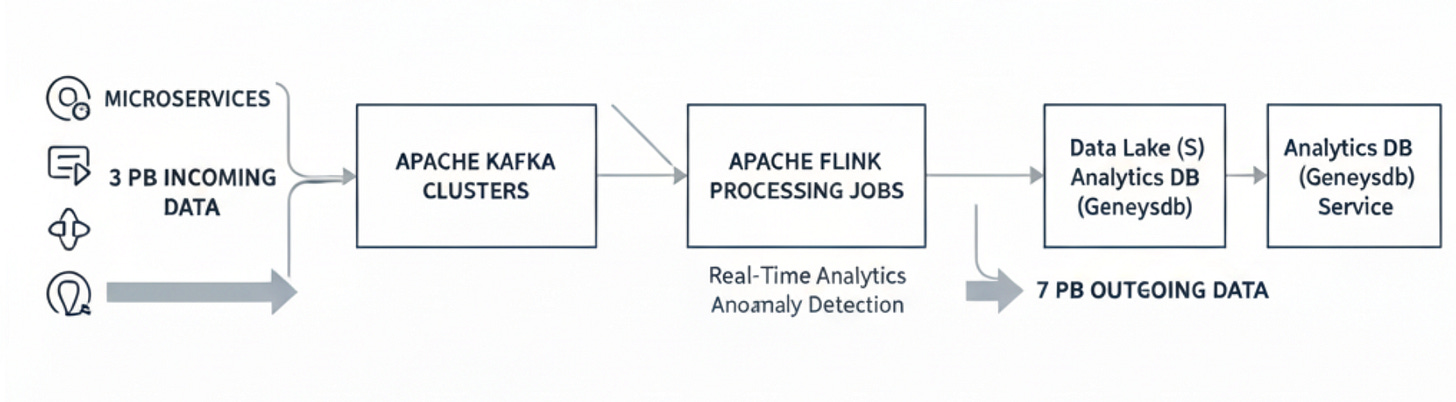

Keystone’s stream processing architecture. The platform processes trillions of events daily, routing approximately 3 petabytes of incoming data and 7 petabytes of outgoing data through 100 Kafka clusters and independent Flink processing jobs.

Keystone is Netflix’s real-time stream processing platform that handles data pipeline functionality across its systems. Keystone processes trillions of events per day, handling approximately 3 petabytes of incoming data and 7 petabytes of outgoing data. The platform operates approximately 100 Kafka clusters.

Keystone provides two core services: a routing service for data pipeline functionality and Stream Processing as a Service for custom applications. The routing service handles data collection, processing, and aggregation from all Netflix microservices in near real time, while the stream processing platform enables users to build custom stream processing applications without managing infrastructure complexity.

The platform’s design incorporates failure as a first-class citizen. Every user-declared goal state is stored in AWS RDS as the single source of truth, enabling reconstruction of failed components. Each stream processing job runs on an independent Apache Flink cluster with separate resource allocation, providing runtime isolation and preventing cascading failures.
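The goal-state idea can be sketched as a reconciliation loop: compare what users declared with what is actually running, and emit the actions that close the gap. The code below is a simplified illustration, not Keystone's implementation. The function names, the job names, and the `parallelism` field are hypothetical, and plain dictionaries stand in for the database and the cluster.

```python
def reconcile(goal_state, actual_state):
    """Return the actions needed to make actual_state match goal_state."""
    actions = []
    for job, spec in goal_state.items():
        if job not in actual_state:
            actions.append(("create", job, spec))
        elif actual_state[job] != spec:
            actions.append(("update", job, spec))
    for job in actual_state:
        if job not in goal_state:
            actions.append(("delete", job, None))
    return actions


def apply_actions(actions, actual_state):
    """Apply actions to a copy of actual_state and return the new state."""
    new_state = dict(actual_state)
    for op, job, spec in actions:
        if op == "delete":
            new_state.pop(job)
        else:
            new_state[job] = dict(spec)
    return new_state


# Declared goal state (the single source of truth).
goal = {
    "route-playback-events": {"parallelism": 4},
    "route-ui-events": {"parallelism": 2},
}
# What is actually running after one job was lost in a failure.
actual = {"route-playback-events": {"parallelism": 4}}

actions = reconcile(goal, actual)
recovered = apply_actions(actions, actual)
```

Because the declared state survives independently of the running jobs, recovery is just another reconciliation pass, and running `reconcile` again on the recovered state yields no further actions.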

Model Deployment and Validation

Netflix’s model deployment strategy emphasizes both reliability and flexibility. Models are packaged as Docker containers and deployed through Titus, Netflix’s container management platform. This approach ensures operational consistency with other microservices and enables rapid scaling.

The deployment pipeline integrates with A/B testing infrastructure to validate model performance before full rollout. Feature stores maintain consistent, versioned feature definitions across training and serving environments, ensuring models perform reliably when deployed. Real-time feature engineering enables immediate adaptation to changes in user behavior during active viewing sessions.
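Here is a minimal sketch of why versioned feature definitions matter. It assumes a tiny in-process registry rather than any real feature store, and every name in it (`FEATURES`, `feature`, `compute`, `watch_ratio`, `MODEL_SPEC`) is hypothetical. The idea is that training and serving both resolve features through the same pinned (name, version) pairs, so they cannot silently drift apart.

```python
FEATURES = {}  # (name, version) -> function computing the feature from a raw event


def feature(name, version):
    """Decorator that registers one versioned feature definition."""
    def register(fn):
        FEATURES[(name, version)] = fn
        return fn
    return register


@feature("watch_ratio", 1)
def watch_ratio_v1(event):
    return event["seconds_watched"] / event["duration_seconds"]


@feature("watch_ratio", 2)
def watch_ratio_v2(event):
    # v2 caps the ratio at 1.0 so rewatching does not inflate it.
    return min(1.0, event["seconds_watched"] / event["duration_seconds"])


def compute(event, spec):
    """Single code path shared by the training job and the serving path."""
    return [FEATURES[(name, version)](event) for name, version in spec]


MODEL_SPEC = [("watch_ratio", 2)]  # pinned alongside the trained model
event = {"seconds_watched": 5400, "duration_seconds": 3600}

training_row = compute(event, MODEL_SPEC)  # offline
serving_row = compute(event, MODEL_SPEC)   # online
```

If serving had quietly used version 1 while the model was trained on version 2, this event would produce 1.5 instead of 1.0, which is exactly the kind of training/serving skew that pinning versions is meant to prevent.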

Metaflow’s integration with production systems allows data scientists to trigger deployments directly from training flows. The platform tracks the complete lineage from data to deployment, enabling rapid troubleshooting when issues arise. Branched development and deployment through Metaflow’s project isolation ensures that individual developers can iterate without interfering with production deployments.

Observability and Monitoring

Netflix monitors its systems through logs, metrics, and distributed traces, and has built dedicated monitoring infrastructure around all three.

Atlas, Netflix’s time-series telemetry system, processes 17 billion metrics and 700 billion distributed traces per day, alongside 1.5 petabytes of log data. The system uses streaming analytics to process queries as data is collected, enabling millions of alerts without overwhelming infrastructure. The architecture has reduced observability data processing to less than 5% of Netflix’s infrastructure costs.

For ML-specific monitoring, Netflix tracks model performance through continuous evaluation against business metrics. The platform implements automated anomaly detection to identify when model behavior deviates from expected patterns. By monitoring at source before ETL processing, Netflix catches data quality issues early, preventing downstream failures.
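A simple form of automated anomaly detection is a rolling z-score: flag a value when it sits too many standard deviations away from the recent window. The sketch below is a generic illustration, not the method Netflix uses. `RollingAnomalyDetector`, the window size, the threshold, and the click-through values are all assumptions chosen for the example.

```python
from collections import deque
import statistics


class RollingAnomalyDetector:
    def __init__(self, window=20, threshold=3.0, min_history=5):
        self.values = deque(maxlen=window)  # most recent observations
        self.threshold = threshold          # allowed deviation in standard deviations
        self.min_history = min_history      # observations needed before flagging

    def observe(self, value):
        """Return True if value deviates from the recent window, then record it."""
        is_anomaly = False
        if len(self.values) >= self.min_history:
            mean = statistics.fmean(self.values)
            stdev = statistics.pstdev(self.values)
            if stdev > 0 and abs(value - mean) / stdev > self.threshold:
                is_anomaly = True
        self.values.append(value)
        return is_anomaly


detector = RollingAnomalyDetector(window=20, threshold=3.0)
# Hypothetical hourly click-through rates; the last value is a sudden drop.
click_through = [0.30, 0.31, 0.29, 0.30, 0.32, 0.31, 0.30, 0.29, 0.31, 0.12]
flags = [detector.observe(v) for v in click_through]
```

Only the final drop is flagged here. The same check applied to raw input statistics (null rates, row counts) rather than model outputs is one way to catch data quality issues at the source.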

Causal Inference for Robust Decision Making

Beyond traditional A/B testing, Netflix employs causal machine learning to understand the incremental impact of its ML systems. This approach enables Netflix to move beyond purely associative machine learning to understanding causal mechanisms. By controlling for confounders and estimating the true incremental impact, Netflix ensures its ML systems deliver real value to members rather than just correlating with existing behavior.

Netflix uses causal inference techniques like Double Machine Learning to estimate the long-term effects of product features. This combination of experimentation and quasi-experimentation provides robust decision-making frameworks even when traditional experiments aren’t feasible.
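To show the mechanics of Double Machine Learning, here is a deliberately small sketch of the cross-fitted "partialling-out" estimator on simulated data. It makes strong simplifying assumptions: a single confounder, a linear world, and ordinary least squares standing in for the flexible ML models that real DML would use for the nuisance fits. The data-generating numbers (a true effect of 2.0) are invented so the result can be checked.

```python
import random


def fit_line(x, y):
    """Ordinary least squares for y = a + b*x; returns (a, b)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    sxx = sum((xi - mx) ** 2 for xi in x)
    b = sxy / sxx
    return my - b * mx, b


def residuals(model, x, y):
    a, b = model
    return [yi - (a + b * xi) for xi, yi in zip(x, y)]


def double_ml(x, t, y, folds=2):
    """Cross-fitted partialling-out estimate of the effect of t on y."""
    n = len(x)
    res_t, res_y = [], []
    for k in range(folds):
        held = [i for i in range(n) if i % folds == k]
        rest = [i for i in range(n) if i % folds != k]
        # Nuisance models are fit on the other folds only (cross-fitting).
        model_t = fit_line([x[i] for i in rest], [t[i] for i in rest])
        model_y = fit_line([x[i] for i in rest], [y[i] for i in rest])
        res_t += residuals(model_t, [x[i] for i in held], [t[i] for i in held])
        res_y += residuals(model_y, [x[i] for i in held], [y[i] for i in held])
    # Final stage: regress outcome residuals on treatment residuals.
    return sum(rt * ry for rt, ry in zip(res_t, res_y)) / sum(rt * rt for rt in res_t)


rng = random.Random(0)
x = [rng.gauss(0, 1) for _ in range(4000)]                # confounder
t = [0.8 * xi + rng.gauss(0, 1) for xi in x]              # treatment depends on x
y = [2.0 * ti + 1.5 * xi + rng.gauss(0, 1) for ti, xi in zip(t, x)]  # true effect: 2.0

naive = fit_line(t, y)[1]      # ignores the confounder
estimate = double_ml(x, t, y)  # removes the confounder's influence first
```

The naive regression overstates the effect because the confounder drives both treatment and outcome, while the residual-on-residual estimate lands close to the true value of 2.0.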

Key Takeaways

Netflix’s implementation demonstrates several patterns in ML systems reliability:

Infrastructure First: Sophisticated algorithms require high-quality, real-time data pipelines and robust infrastructure for deployment.

Comprehensive Testing: Every change undergoes rigorous experimentation with proper statistical methodology before production deployment.

Failure as First Class Citizen: Systems are designed assuming components will fail, with built-in isolation and automated recovery mechanisms.

End-to-End Visibility: Complete observability from data ingestion through model serving enables rapid identification and resolution of issues.

Causal Thinking: Moving beyond correlation to understand causal impact ensures ML systems deliver real value.

Science Centric Culture: Empowering data scientists with production-grade tools reduces friction between experimentation and deployment.

Netflix’s implementation shows how reliability at scale requires infrastructure, testing, and operational practices alongside algorithms. Their approach combines real-time data processing, experimentation platforms, and observability tools to maintain system reliability.

References

Netflix Technology Blog. (2021). “It’s All A/Bout Testing: The Netflix Experimentation Platform.” https://netflixtechblog.com/its-all-a-bout-testing-the-netflix-experimentation-platform-4e1ca458c15

Netflix Technology Blog. (2024). “Supporting Diverse ML Systems at Netflix.” https://netflixtechblog.com/supporting-diverse-ml-systems-at-netflix-2d2e6b6d205d

Netflix Technology Blog. (2018). “Keystone Real-time Stream Processing Platform.” https://netflixtechblog.com/keystone-real-time-stream-processing-platform-a3ee651812a

GitHub - Netflix/metaflow. “Build, Manage and Deploy AI/ML Systems.” https://github.com/Netflix/metaflow

Diamantopoulos, N., et al. (2019). “Engineering for a Science-Centric Experimentation Platform.” arXiv:1910.03878. https://arxiv.org/abs/1910.03878

Netflix Technology Blog. (2022). “A Survey of Causal Inference Applications at Netflix.” https://netflixtechblog.com/a-survey-of-causal-inference-applications-at-netflix-b62d25175e6f

Netflix Technology Blog. (2024). “Causal Machine Learning for Creative Insights.” https://netflixtechblog.com/causal-machine-learning-for-creative-insights-4b0ce22a8a96

Netflix Technology Blog. (2018). “A/B Testing and Beyond: Improving the Netflix Streaming Experience with Experimentation and Data Science.” https://netflixtechblog.com/a-b-testing-and-beyond-improving-the-netflix-streaming-experience-with-experimentation-and-data-5b0ae9295bdf

Valohai. “Building Machine Learning Infrastructure at Netflix - Interview with Ville Tuulos.” https://valohai.com/blog/building-machine-learning-infrastructure-at-netflix/

TechTarget. “Enterprises Rework Log Analytics to Cut Observability Costs.” https://www.techtarget.com/searchitoperations/feature/Enterprises-rework-log-analytics-to-cut-observability-costs

InfoQ. (2018). “Netflix Keystone Real-Time Stream Processing Platform.” https://www.infoq.com/news/2018/09/Netflix-Keystone-Real-Time-Proc/

Xu, Z. (2022). “The Four Innovation Phases of Netflix’s Trillions Scale Real-time Data Infrastructure.” Medium. https://zhenzhongxu.com/the-four-innovation-phases-of-netflixs-trillions-scale-real-time-data-infrastructure-2370938d7f01

Uttamchandani, S. (2020). “Lessons learned from Netflix Keystone Pipeline handling trillions of daily messages.” QuickBooks Engineering. https://quickbooks-engineering.intuit.com/lessons-learnt-from-netflix-keystone-pipeline-with-trillions-of-daily-messages-64cc91b3c8ea

Netflix Technology Blog. (2022). “Orchestrating Data/ML Workflows at Scale With Netflix Maestro.” https://netflixtechblog.com/orchestrating-data-ml-workflows-at-scale-with-netflix-maestro-aaa2b41b800c

Netflix Technology Blog. (2019). “Open-Sourcing Metaflow, a Human-Centric Framework for Data Science.” https://netflixtechblog.com/open-sourcing-metaflow-a-human-centric-framework-for-data-science-fa72e04a5d9

I remember reading about their A/B testing on their blog and was so mesmerized.

This is a good engineering deep dive and their architecture is outstanding.

This is the kind of engineering discipline I want to see more of in the AI space (and among growth marketers). Not just building things that work once, but building things that keep working when reality gets messy.